Honestly, I was completely stumped: after picking a photo and editing it, I had no idea how to ‘move on’ to the next step.

Especially on a 32″ screen.

It took me a while to find the button on the left 😀


In the previous post, I gave a quick introduction to the CX ‘trend’ and the difficulties you need to recognize when you want to do CX for a company (large or small). Many ‘experts’ who run CX training courses and advise corporations and businesses on CX argue that you have to start from strategy. That is, if a business wants to improve and do CX well, it must have a strategy that puts customers and customer experience at the center, and then its products, services, and activities must revolve around, optimize for, and serve that ‘center’.

Sounds very convincing, right?

Of course it does. Every company needs a strategy that focuses on customers and improves customer experience in order to keep loyal customers. Just as for us humans, having health means having everything. OK, so how do you actually build that strategy?

On strategy and CX strategy

You may find it funny that anyone still asks how to do CX strategy. Why not go to Google and search for ‘CXPA customer experience framework’ and get a pile of content, or search for the keyword ‘customer experience strategy’ and get plenty of results as well? Most of the diagrams you find will be structured like this:

Unfortunately, the model above is a process or a framework, not a strategy. The first step in that model is the actual strategy work (aka define / align). Although this CXPA model is somewhat dated now, you can still use part of it and understand that, at the strategy stage, CX people need to review branding, internal / external communication, brand promise, and so on. In plain terms, it means reviewing the following about the business:

So is this the job of the folks doing CX? No. It is the job of the branding team; you only review the current state. Who decides what the branding will look like, or what the brand promise will be? Is it the CXO or the CX manager? No. It is the brand manager. You only give input or advice, because if you studied experience design or moved into CX from another field, you basically lack the branding expertise to do that work. If you come from branding, then you can take part in it. But it is still the branding team's job, not the CX team's. In fact, everything like measuring brand awareness, feedback on the brand promise, brand advocacy, brand trust… falls within the branding team's scope, or in some businesses, the marketing & branding team in general.

You could argue that the CX team's job is to ‘align’ the current state and then propose a strategy for a better customer experience. That is one way to see it, but you are not the one who decides what the business will ‘promise’ or how it will ‘communicate that promise’. The branding team is accountable for its branding work. As for you, do you want to take on branding and be accountable for all of the branding KPIs? Personally, I have never seen a CX person dare to take on those KPIs.

So what is strategy?

If you have studied or read about business strategy or corporate strategy, you will know that strategy usually has two approaches.

The vertical approach

The generic approach looks like this:

Beyond these there are many smaller strategies (sometimes called tactics) that you can look up on Wiki here, or you can read Corporate Strategy by Michael Porter (I have only read one basic book from this strategy guru's series :D)

The people who choose a strategy for the business are the CEO and other C-levels such as the CMO, CFO, brand director… and if there is a CXO, that person only advises, so that the strategies chosen by the board of management deliver on what the brand has promised to customers. CX people are not accountable for the company's business-result KPIs; in truth, they do not have the capacity or capability to be accountable for them.

So, can CX do strategy? CX can join other departments to contribute ideas and thinking, and help those departments' strategies ‘fit the spirit and mindset’ of putting customers and customer experience at the center. Full stop.

Whoever is accountable for the KPI is the one who chooses how to act. CX, like Agile, helps other units act better and more effectively, provided those units adopt the CX mindset.

I came up with this line myself, following Agile thinking 🙂

Can't do strategy, can't do branding, so what does CX do?

First, you need to let go of the misconceptions about the ‘power’ of CX and answer this question instead: what are the deliverables of CX? If marketing generates leads, branding creates brand awareness through the brand identity and related activities, sales brings in customers, the product team creates new products (digital or physical), the customer service team produces satisfaction and retention rates, the finance team produces the balance sheet and controls P&L, and so on, then what does CX produce for the business every day, every week?

You could argue as follows:

If you look again at CXPA's CX Framework diagram, in the ‘key elements’ section you will see that all of those tools belong to the expertise of other teams in the business / organization, and those teams have deeper expertise (in a field they have devoted their careers to) and are better at it than you, a CX person.

When all is said and done, CX has no tools for direct execution (it executes indirectly) and has nothing tangible to deliver (invisible deliverables). I am not talking about delivering daily reports and daily statistics here. Every department and team has to do that. Delivery here should be understood as a concrete piece of work within the machine that runs the business.

Experience changes every day, so if you turn service into a handling process, you lose. If you don't turn it into a process, you can't scale. If you turn it into a guiding spirit so the process stays flexible during rollout, it becomes costly because it gets abused and can't be managed.

Tony Le 😀 (I set him up to say this)

So, because CX lacks both the tools and the team for direct execution, and because, even when it borrows people from other units, it lacks the ability to manage that team professionally as well as the competence and experience in that area (marketing, for example), it does not dare commit to KPIs with the BOM (board of management / CEO). Are there exceptions? Possibly, and that person is a CEO who is good at both CX and business.

Another risk for CX people, and for Agile coaches, is that they are indirect contributors to the business. So when costs need to be cut, the indirect contributors are the first to go, or the contract with the CX consulting partner is terminated. By contrast, direct contributors such as salespeople and developers are kept, because they sustain the company's cash flow.

So is CX useless and… fit for the bin? No. CX, like UX and Agile, has its own value at a given point in a business's maturity.

In short, how should you start doing CX?

I have written at such length so that you understand the true nature of CX. You can think of CX as a supporting function for the business. CX is as important as a person exercising, living healthily, and taking vitamin supplements when needed. From a religious angle, a CX person can be seen as a monk, a missionary travelling everywhere (to every business) to spread and teach CX thinking to everyone, and in doing so, raise the quality of the experience the business provides.

Look at Agile for an example and a clearer perspective on CX. Agile was put forward by long-time software practitioners who came together to find a better way of building software. Agile is not a process; it is a framework of practices, and depending on the business's circumstances and the team's skill level, you can choose Agile tools such as XP, Kanban, Scrum, Lean, and so on. UX has already adopted Lean and Scrum (UX debt, UX backlog, etc.), and CX can certainly do the same.

The fact is that Agile was born a long time ago, more than 20 years back, yet it took a whole decade for this mindset and its tools to be embraced and widely used in software companies and in teams building software and digital products. Lean needed a similar amount of time. So did Scrum. (If you are not fluent in Agile, I recommend reading up on it.) Over time, Agile developed many tools and metrics of its own, and even processes for rolling it out within a business. The typical example is ‘adoption’, meaning a business needs time to ‘accept’ the use of Agile. Scrum, one of Agile's methodologies, is very widely used today. However, to roll out Scrum (whose idea and thinking grew out of rugby), a business also needs roles such as Agile coach, Scrum master, and so on. Once it is rolled out, the scaling stage of Agile brings yet more tools and more difficulties.

Agile or Scrum also could not have succeeded on their own if the software industry had not evolved and popularized things like CI/CD, Git flow, automation testing, and so on. This shows that although Agile is a mindset, not every company can apply it, and it is not something you can do the moment you say so (even though Agile can be trialled with a team of 5-7 people, with results visible after a few sprints). In the end, the deliverable of Agile is shipping software. That is why the scope of executing and rolling out Agile (after 22 years of development) is still far smaller than that of CX, and Agile contributes directly to the software development team.

CX should, perhaps, start the way Agile did: be treated as a mindset that covers the whole business, with an approach chosen to fit that business's strategy as well as the capability and skill level of its people. Neither Agile nor CX is a product that can be mass-produced like industrial goods, nor a set of processes that can be applied across the board. On the contrary, they need to be ‘tailored’ to the business that needs them.

If you have ever done UX or CX, you will have seen your workflow ‘break’ right after you finish the research stage or the mining of insights from the research results, also called empathy. You won't know what to do next beyond producing a list of ideas for improving products, services, and processes so those ideas can be tested. UX is more flexible because you can fake a prototype to test your ideas, but even so, when the product team builds the real product, it still differs a lot from your prototype. That is why ‘try and fail’ is a process you are forced to go through.

The most important skill for a CX person?

CX has more touch points and is tied to operating processes as well as the processes and strategies of other departments in a business. Moreover, because CX does not have a team and tools for direct execution on the scale other departments do, the main job of a CX person is to communicate the CX mindset and to coordinate experiments with other units. What does coordinating mean? In practice, it is the work of persuading other units to work with you, to weave CX ideas into their activities and plans, and to set measurable goals and share accountability with you. CXPA does list a bunch of skills for people to get certified in, but the two skills (which I will cover in depth in another post) that I have found most useful in my career are:

CX people have to ‘sell’ their ideas and work, their position and mindset, to other departments, and have to prove that it works in a way that gets recognized. For example, if the retention or re-order rate goes up, do you claim it as a CX achievement, or is it due to effective marketing, promotion, and ads? If you create a surprise for customers (make a wow), is that thanks to the creativity of the marketing team or the IT big data team? And so on and so forth.

In the end, UX, like Agile or CX, is not a golden key for every business. Different customer segments and different markets are still affected by different behavioral factors, which I summarized in post number 1.

In the next post, I will write more about some approaches to CX that I have applied and experienced over the years.

Thanks,

Germany 8/2022

References

Strategy 🙂

CX framework

As of today, I have spent nearly 10 years with UX, then wandered into UI and CX, yet the projects that truly succeeded, from UX to CX, in terms of ‘hitting KPIs’ can be counted on one hand. So why are UX, and more broadly CX, so ‘hot’? Why do so many people talk about it while no one has come up with a formula for success? Why are there many who teach and few who actually do? In this post, I hope to answer part of that.

UX vs CX

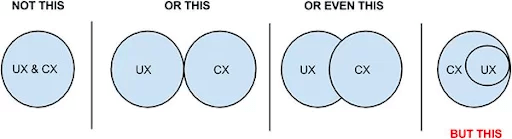

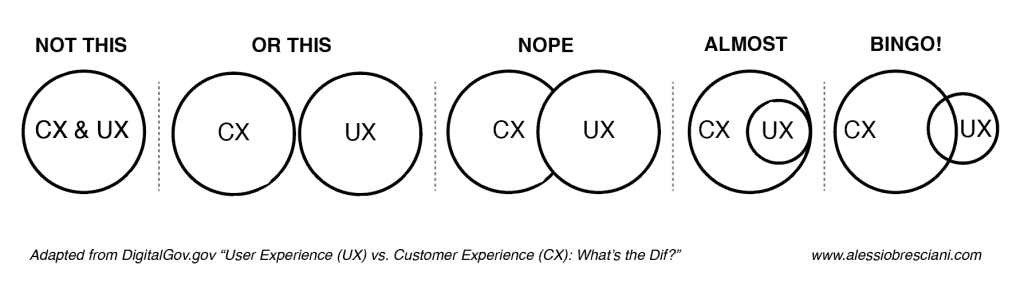

First, let's look at the model-based explanations that you can easily find online.

As you can see, most people sum up CX as ‘all’ of a person's experiences with a company (a business brand), which includes UX. That holds 90% of the time, but from the standpoint of a non-profit product or a free product, UX is somewhat separate. Furthermore, if the business is B2B and provides services such as consulting or survey research, UX is even less relevant. (You could counter that the consulting deliverables also need UX, such as IA and UX writing, but realistically the UX content is very small.)

Why is CX so easy to talk about?

Picture it this way: talking about CX for a business is like talking about health for a person. Is health important? Yes, no one can deny it, because having health means having everything. Likewise, are loyal customers important to a business? Yes, of course, no one can deny it. That is why, when persuading someone of the importance of CX, people often use the ‘foot-in-the-door’ technique and ‘appeal to the obvious’ so the listener cannot reject CX. After that come the things that supposedly need doing, such as CRM, CJM, NPS, and so on.

And so people rush to study CX, teach CX, consult on CX, and sell solutions called CX Platforms, which are roughly a combination of tools & toys such as CRM, social listening, digital marketing, customer service automation software, analytics tools, and so on.

So what is the nature of CX?

Unlike the basic sciences, or fields that have existed for a long time and built up general theories and solid methodologies, CX at this point should be understood as a mindset. It is like your own life: caring about your health and living well is a mindset, and it becomes a lifestyle after a long time of practicing the mindset you pursue. To live healthily, you need to ‘fight’ yourself to overcome ‘temptations’ such as alcohol, staying up late, and binge-watching shows or binge-reading to kill time, and you need to exercise regularly, eat and drink clean food that suits your constitution, and so on. And that is only the ‘physical’ part of the body. To be healthy in spirit, you need to practice meditation, read books, and practice thinking and managing your emotions, for ‘many years’.

CX is a mindset of delivering good experiences to customers, so that they always have good memories of, and are satisfied with, your company's products and services. To do that, you also have to ‘fight’ every day against difficulties and temptations (e.g. choosing between a customer who pays you 1 billion and one who pays you 100 million for the same project), and against the contradictions within yourself or within the business itself. (Remember: every business has conflicts both inside and out, and there is an entire discipline called Organizational Conflict Management.)

From another angle, both CX and UX carry the letter X, taken from the English word ‘eXperience’, and the reality is that ‘a great many people’ in Vietnam do not yet understand human experience correctly or deeply. Experience is a concept with a broad, multi-dimensional field of study. From the ‘physical’ angle, it covers all five main human senses: hearing, sight, taste, smell, and touch. From the ‘cognitive science’ angle, it includes emotion, memory, and cognition (including both visual perception and the brain's comprehension). From the ‘behavioral science’ angle, it includes important factors such as ‘anthropology’, ‘culture’, and ‘species traits’. There are a few other angles, but this list is enough. You may not have come across these areas; I have been wrestling with and studying ‘experience’ for 10 years, and have seen many textbook theories of experience turn out ‘not true’ in practice… Only later, when I widened the scope of my study, did I see that the reason is that there are too many different influences. (Recently the concepts of ‘behavior design’ and ‘intervention design’ have emerged, focusing on intervening in / steering behavior.)

Just as the ‘mindset’ of living healthily and being in good health takes a lot of change, training, and struggle, CX is also a mindset and needs much the same. Just as life has too many things that affect a person, so does CX, and at the scale of a business, CX affects too many people.

Why is CX hard to do?

Above, I listed the factors that affect experience so you can see why designing experiences is hard, whether for customers (CX) or for product users (UX). To explain further, I will walk through a few of the areas listed.

From the ‘physical’ angle, when you call a company, whether or not the person answering ‘solves’ your problem, and whether that person does everything to help you or just follows the process, your impression of that voice is still the first experience to reach your brain. When people from Nghệ An, Huế, Hà Nội, Ho Chi Minh City, and so on hear an accent different from their own, what percentage do they actually catch? How comfortable are they with that sound? Is the agent's voice high-pitched or warm and deep, harsh or gentle? The sound you hear at that moment is the touch point, not the agent 🙂

From the ‘cognitive science’ angle, when the agent explains ‘the process’ and ‘your problem’ to you, for example when your credit card has suddenly been blocked, do you think a good experience is when the bank employee fixes it for you right away after asking a few basic questions? And if the employee asks you to follow the bank's process, will you start cursing? Then you move to another bank, believing the service will be better. Now think flexibly: suppose you have worked in banking for 10 years, or in e-commerce for 5 years, or in international payments for 8 years, or in risk management for 10 years, and so on. In short, you have more knowledge and awareness of financial security than someone outside the industry (an ordinary person). Would you want that bank employee to follow the process or to improvise a fix of their own? Education and experience change perception; different perceptions mean different experience needs.

In addition, if your company is in trading and has 10-20 phone agents, you can sit next to them every day to make sure the process stays simple (at most 5 steps) and that your staff always try to resolve the customer's issue on the first call, at a 90% rate (the term of art is DIRFT: Do It Right First Time). But what will you do when you have 20,000 employees, 300,000 customers, and a presence in 3 countries? At that point you will see that scaling experience is no longer a matter of interactions alone (touch point experience is not a golden key). I will write up a Citibank case study for this scenario in another post 🙂
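To make the 90% first-call target concrete, here is a minimal sketch of how such a rate could be computed from a call log. The function name, the sample data, and the idea of storing ‘number of contacts needed per case’ are illustrative assumptions, not a description of any particular call-center tool.

```python
def first_time_resolution_rate(contacts_per_case):
    """Share of cases that were resolved in exactly one contact.

    contacts_per_case: one integer per customer case, giving how many
    calls it took to resolve that case (hypothetical data layout).
    """
    if not contacts_per_case:
        raise ValueError("need at least one case")
    resolved_first_time = sum(1 for n in contacts_per_case if n == 1)
    return resolved_first_time / len(contacts_per_case)


# Hypothetical sample: 10 cases, 8 of them resolved on the first call.
sample_cases = [1, 1, 1, 2, 1, 1, 3, 1, 1, 1]
rate = first_time_resolution_rate(sample_cases)
meets_target = rate >= 0.90  # the 90% goal mentioned above

print(f"first-time resolution: {rate:.0%}, meets 90% target: {meets_target}")
```

With 10-20 agents you can eyeball this number by sitting next to the team; the point of the paragraph above is that at 20,000 employees the number alone no longer tells you where the experience breaks.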

Finally, from the ‘behavioral science’ angle, one of the hardest to control and also the most interesting, you will realize that your understanding of ‘experience’ so far has been too simplistic (and naive). When I studied Consumer Behavior at the University of Essex, I compiled 12 areas that influence customer behavior. In fact, marketing has been studying this for something like a hundred years; it is not a new invention. (UX and CX, for the most part, just borrow theories from other sciences.) Rather than going deep into theory, try answering these questions yourself:

Why don't Apple and the iPhone dominate the world, while Samsung's smartphone sales and the number of Android phones sold remain higher?

Why does Windows still exist alongside macOS? And CentOS, Linux, and so on.

Why don't you drink coffee at one fixed café your whole life? Assume that café is 100% good in terms of customer experience.

Why do some bands, songs, and genres last only a while before fading away or keeping just a small audience?

Why do new games still come out every year?

Why are new TV series and films still produced every year?

Why is it that you know a salon that does great haircuts with 100% good customer experience, yet you still get your hair cut at the place near home?

Why is it that you already own a watch or a perfume from a brand you love and are loyal to, yet you still buy 10-20 perfumes from other brands and 10 watches from other makers?

CX and UX cannot answer these questions. Only the foundational sciences with a deep body of research have the answers: fields such as Consumer Behavior, Cognitive Science, Business Psychology, Behavioral Psychology, Human Factors, and so on. These deal with human needs and with human traits by group and by that group's culture. They deal with what acts on people in a specific context, known in the field as the power of context and need intervention factors.

On my first day studying Human Factors in my master's program, the professor put it bluntly:

Humans are the least loyal species

And the truth is: people are not loyal to an experience, a brand, or a business at all; they are loyal only to their own needs and nature. This is not so bad, because it is curiosity and the hunger for new experiences that drove people to discover new continents and to find every way to fly beyond Earth in search of new lands. Some people, no matter how many clothes they own, still go shopping, try things on, and buy from new stores and new brands. That is normal, and you should accept it in order to go deeper into experience design.

Once you can answer all of the questions above objectively, you will gradually come to understand experience and experience design, and then find the right approach for your own practice.

In the next part, I will talk about what the approach looks like once you have the CX mindset.

Germany, 07/2022

Binh Truong

Over the past five years, the world has seen many innovative ideas in interaction and user interfaces. People around the globe are now familiar with concepts such as AR, VR, voice commands, gestures, and so forth. With the rise of AI (Artificial Intelligence), applications and devices are becoming more and more intelligent, with the ability to predict human needs and behavior. In this article, I will sketch the likely user characteristics and interaction styles of the next decade.

The portrait of the future users

To picture future users, I look at my son, who is six years old now. What will he be like in ten years, when he is sixteen? Nowadays, children from six to eight years old already use electronic devices such as smartphones and tablets, and they learn quite fast. A typical user of this generation (my boy, in this example) uses multiple senses to interact with applications. They use voice, touch, visual tools, and movement in their daily activities. For example, my kid is very familiar with Google Assistant and Amazon Alexa. Hence, I believe they will feel comfortable with voice commands, hand-movement commands, and human-robot interaction (HRI) in the near future.

Moreover, the portrait of users in the next ten years will broaden from the young generation to people aged 60-70 (as we are all getting older). Users will keep multitasking: even though they cannot multitask like a machine, they still prefer to switch back and forth between things. As a result, the demand for adaptive and integrated devices will increase. Users might want glasses that let them watch streaming video, listen to music, and answer phone calls using eye movement. People with disabilities may also be able to control robotic arms or legs with brain signals.

Predicting the interaction style for user interfaces

Having pictured the users of the future, we can see that application and device interfaces will also need to improve massively. At this moment, most users skip the computer mouse and use a trackpad or touch screen instead. The more they multitask, the less attention they can spare for controlling a device. With that in mind, I believe future interaction styles will focus on a few critical trends, as below.

The voice interface

As modern users get busier, they prefer interaction styles that let them give commands freely. Users will turn the TV or the air conditioning on or off with just a voice command. Mobile and web applications increasingly support voice search, and it should work smoothly in the coming years. The voice interface is not an innovation in terms of technology, but the booming AI industry is what makes it practical to apply.

The robot and automation interaction

Robotics is growing in every area of our lives. From medical care to customer support, it is becoming a key business strategy because of its quick response to clients. For example, chatbots have been a big trend over the last three years, supporting elementary conversations. In the next ten or twenty years, we will have more than enough data to train computers with deep learning and make programs more powerful and smarter. We already have vacuum cleaners and rice cookers, and I believe we will somehow be able to ‘make a full week plan’ for those devices in 2025.

Interaction through human senses

Facebook sought to patent a way of silently turning on our camera to track our facial expressions (3), and this might become common in the future. Imagine that Netflix, a music app, a travel application, or even your juicer could use your facial expression today to suggest what you should drink, where you should go, and what you had better watch and listen to.

That is how the world might surround you and serve you, with the intent of satisfying you as much as possible.

To conclude, future interaction styles might focus on flexibility and adaptability based on the user’s profile or context. Users will be able to interact with the things we design in whatever way is most convenient for them. Hands will not be the only way to interact; other senses and movements will also be input sources for applications.

References

(1) https://uxdesign.cc/the-future-ui-trend-of-2025-14d9fdf6745

(2) https://careerfoundry.com/en/blog/ux-design/whats-the-future-of-ux-design/#how-will-ux-change

(3) https://www.inc.com/minda-zetlin/facebook-patents-spying-smartphone-camera-microphone-privacy.html

#agile #scaleagile

So, today I passed the LinkedIn assessment for Agile methodologies, thumbs up. However, there are a few things I learned along the way that I want to note here.

In the Scaled Agile Framework, if you can measure only one thing, what is it?

Note: If you only want to measure one thing in the Scaled Agile Framework (SAFe®) or other frameworks, it could be “cost of delay” (that is the official answer, according to the SAFe® test). This is where I read it.
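As a side note, cost of delay is usually put to work through WSJF (Weighted Shortest Job First), where SAFe describes cost of delay as the sum of user-business value, time criticality, and risk reduction / opportunity enablement, divided by job size. Below is a minimal sketch of that calculation; the `Job` class, the backlog items, and all the scores are made up for illustration and are not taken from the assessment.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    business_value: int       # relative user-business value
    time_criticality: int     # how fast the value decays if we wait
    risk_or_opportunity: int  # risk reduction / opportunity enablement
    job_size: int             # relative size, a proxy for duration

    @property
    def cost_of_delay(self) -> int:
        # Cost of delay as the sum of the three relative scores.
        return self.business_value + self.time_criticality + self.risk_or_opportunity

    @property
    def wsjf(self) -> float:
        # Weighted Shortest Job First: cost of delay divided by job size.
        return self.cost_of_delay / self.job_size


def prioritize(jobs):
    """Return jobs ordered from highest to lowest WSJF."""
    return sorted(jobs, key=lambda job: job.wsjf, reverse=True)


# Hypothetical backlog with relative (Fibonacci-style) scores.
backlog = [
    Job("checkout redesign", 8, 5, 3, 8),    # cost of delay 16, WSJF 2.0
    Job("fix login bug", 5, 8, 2, 3),        # cost of delay 15, WSJF 5.0
    Job("new reporting API", 13, 3, 5, 13),  # cost of delay 21
]

for job in prioritize(backlog):
    print(f"{job.name}: CoD={job.cost_of_delay}, WSJF={job.wsjf:.2f}")
```

In this made-up backlog the small bug fix comes first even though it has the lowest cost of delay, because it is cheap to deliver; that trade-off is the reason the ratio is used rather than the raw score.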

Three key differences between a task board and a kanban board

This is the article you should read

What is the Enabler’s role in Scaled Agile Framework?

An Enabler supports the activities needed to extend the Architectural Runway to provide future business functionality. These include exploration, architecture, infrastructure, and compliance. Enablers are captured in the various backlogs and occur throughout the Framework. This is further reading.

What are the most common ways to split a story?

Further reading for story splitting is here:

What happens when a story is not accepted by the Product Owner?

Abandon it. In some cases, the story may be abandoned. This is a healthy response of a mature product owner, and it typically happens when the data does not provide the information the product owner was hoping to get. They see that what the team can realistically create based on what’s in the system is not what they or the stakeholders had envisioned.

That’s it so far.

Hope it helps whenever you read this.

Cheers!